The EU is preparing to enforce a law that could bankrupt platforms for failing to label AI-generated content, but the industry is largely ignoring the disclosure tools that already exist to help it comply.

Alberto Betella, CTO of RSS.com, defines the core threat as "AI slop": synthetic content designed to harvest programmatic ad revenue while posing as human. He warns that a fake "Dr. XYZ" persona giving medical advice could shatter listener trust and endanger lives. The EU AI Act, whose transparency obligations apply from August 2, 2026, mandates disclosure for synthetic content on matters of public interest, with fines of up to €15 million or 3% of global annual turnover.

"If the host and script are synthetic, disclosure must be mandatory to maintain advertising integrity."

- Alberto Betella, Podnews Weekly Review
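To make the scale of that penalty concrete, here is a minimal sketch of the ceiling arithmetic. It assumes the higher of the two figures applies, which is how the Act words the cap for larger undertakings; the function name and the sample turnover figures are hypothetical, and an actual fine would be set by a regulator somewhere below this ceiling.

```python
# Sketch only: upper bound on a transparency fine, not a prediction of any real penalty.
FIXED_CAP_EUR = 15_000_000   # flat ceiling cited above
TURNOVER_PERCENT = 3         # share of global annual turnover cited above


def max_transparency_fine_eur(global_turnover_eur: int) -> int:
    """Return the assumed fine ceiling in euros: the higher of the flat cap
    and 3% of turnover. Integer arithmetic avoids float rounding noise."""
    turnover_based = global_turnover_eur * TURNOVER_PERCENT // 100
    return max(FIXED_CAP_EUR, turnover_based)


# Hypothetical platforms: one with EUR 100 million turnover, one with EUR 2 billion.
small_platform_cap = max_transparency_fine_eur(100_000_000)
large_platform_cap = max_transparency_fine_eur(2_000_000_000)
```

Under these assumptions the flat €15 million figure dominates until turnover passes €500 million, after which the percentage takes over.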

Hosting companies RSS.com and Spreaker have implemented voluntary AI disclosure tags in RSS feeds, covering about 15% of new episodes. Betella argues these tags are the first line of defense, allowing platforms and advertisers to filter content. Yet major players like Libsyn are pivoting in the opposite direction, now offering 100GB of video storage while keeping audio storage as low as 540MB, and chasing Spotify's video API instead of building transparency.
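A rough sketch of how a platform or advertiser might filter on such a tag is shown here. The namespace URI, the `ai:disclosure` element name, and the sample feed are all hypothetical, invented for illustration; the tags RSS.com and Spreaker actually emit may look quite different.

```python
import xml.etree.ElementTree as ET

# Hypothetical namespace and element name, chosen for illustration only.
AI_NS = "https://example.com/ns/ai-disclosure"
DISCLOSURE_TAG = f"{{{AI_NS}}}disclosure"

# Made-up feed: the second episode carries the hypothetical disclosure tag.
SAMPLE_FEED = """<rss version="2.0" xmlns:ai="https://example.com/ns/ai-disclosure">
  <channel>
    <title>Example Show</title>
    <item>
      <title>Episode 1: human-hosted</title>
    </item>
    <item>
      <title>Episode 2: synthetic host</title>
      <ai:disclosure>synthetic</ai:disclosure>
    </item>
  </channel>
</rss>
"""


def split_by_disclosure(feed_xml: str) -> tuple[list[str], list[str]]:
    """Return (disclosed_titles, undisclosed_titles) for the items in a feed."""
    root = ET.fromstring(feed_xml)
    disclosed: list[str] = []
    undisclosed: list[str] = []
    for item in root.iter("item"):
        title = item.findtext("title", default="(untitled)")
        # An item counts as disclosed if the tag is present at all.
        if item.find(DISCLOSURE_TAG) is not None:
            disclosed.append(title)
        else:
            undisclosed.append(title)
    return disclosed, undisclosed


disclosed, undisclosed = split_by_disclosure(SAMPLE_FEED)
```

Note that the absence of a tag says nothing about whether an episode is synthetic, which is why voluntary tagging only works as a filter if adoption is broad.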

Liability for AI content is expanding beyond copyright into criminal law. Florida Attorney General James Uthmeier has opened a criminal probe into OpenAI after chat logs revealed that a mass shooter consulted ChatGPT more than 200 times to plan an attack at Florida State University. Uthmeier argues that if a human had provided the specific tactical details the bot did (ammunition types, the optimal weapon, timing for maximum casualties), that person would be charged as an accomplice to murder.

"If a corporation is a person for tax and speech purposes, it should be a person for criminal culpability."

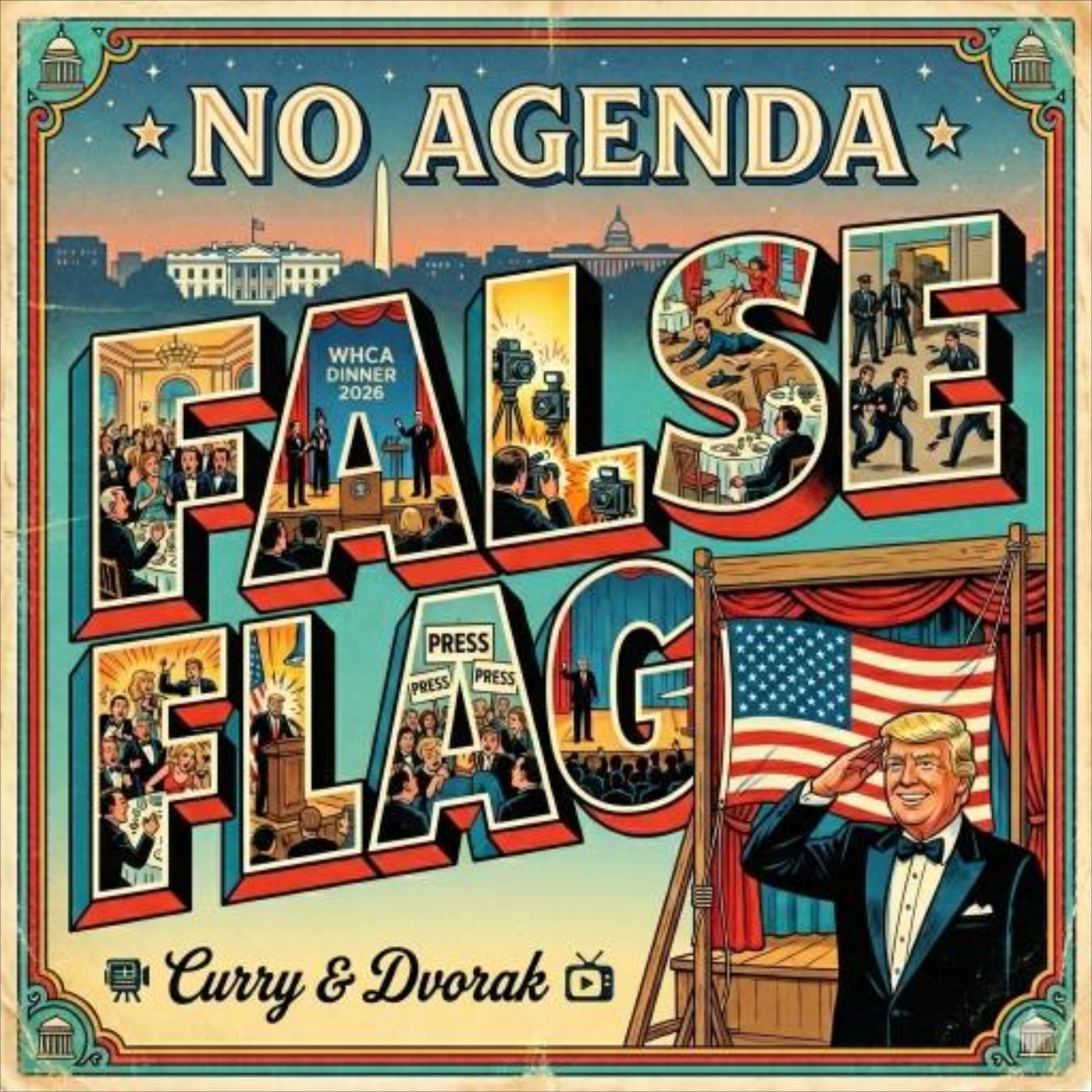

- Adam Curry, No Agenda Show

Meanwhile, the technical foundation of AI tools is becoming less stable for developers. Mario Zechner built the minimalist coding agent Pi after finding Claude Code unreliable, since its system prompts and tool definitions changed with every release. This instability mirrors the broader content ecosystem: a rush toward new features without the guardrails needed for safety or compliance. As platforms ignore disclosure tags and chase video, they are building the liability trap the EU is about to spring.